When Teens Treat AI as Friends: Social Impact & Risks

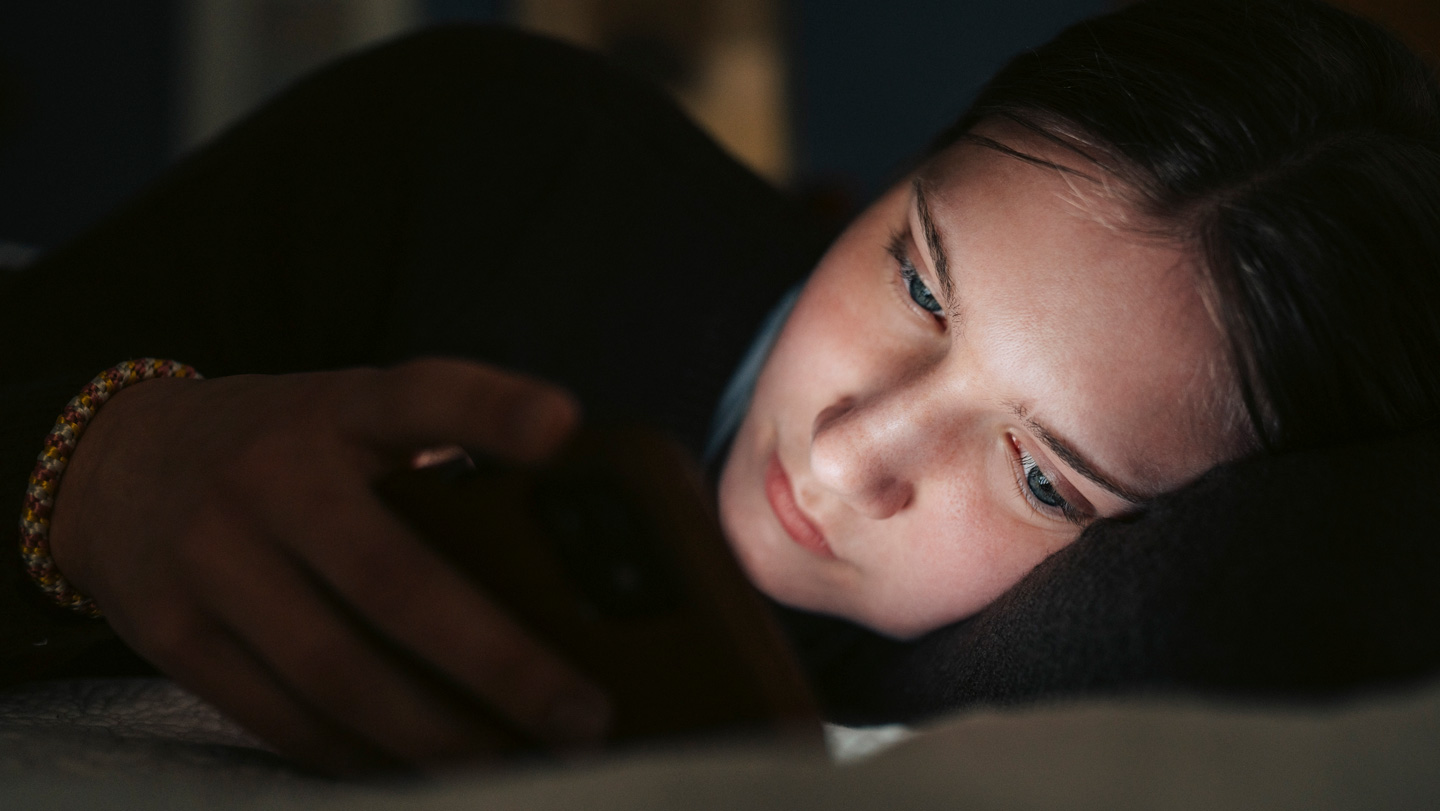

The digital age has ushered in an era where technology isn't just a tool, but increasingly, a companion. For teenagers, this trend is profoundly evident in their growing reliance on AI chatbots. What started as a novelty for school assignments has rapidly evolved into a deeply personal engagement, with young people turning to AI to discuss everything from daily worries and relationship squabbles to profound mental health concerns and even suicidal thoughts. This shift raises crucial questions about the social impact and inherent risks when teens begin to treat AI as a friend and turn to chatbot advice as a primary source of support.

The Allure of AI Companionship: Why Teens Turn to Bots

There's a compelling reason behind the surge in teens confiding in AI. Reported figures suggest that a significant share of European youth, around 35%, now interact with AI chatbots as if they were real people. For many, especially vulnerable children, these systems offer a non-judgmental, always-available ear. Imagine a teenager feeling overwhelmed and asking, "Hey, I'm feeling pretty down today, what can I do about it?" An AI chatbot responds instantly, offering a safe space to vent, express anger, or simply articulate complex emotions they might struggle to share with humans. One young person described it as being able to "vent, scream, and curse without any judgment."

This accessibility and perceived lack of judgment make AI particularly attractive. In a world where social pressures are high and mental health stigma persists, a chatbot provides a low-threshold entry point for discussing difficult feelings. For lonely children, in particular, the statistics are stark: 23% use these systems because they have no one else to talk to. This highlights a critical need that AI, in its current form, appears to be filling, providing an immediate sense of connection and the promise of understanding, even if that understanding is entirely programmed. The rise in searches for AI chatbot advice for young people reflects this demand for instant, accessible guidance.

The Blurred Lines: Risks of AI as a 'Friend'

While the accessibility of AI chatbots offers undeniable benefits, particularly for verbalizing feelings and initial emotional processing, experts warn of significant risks, especially when teens develop a strong emotional bond. Ramón Lindauer, a psychiatrist and chairman of the child and adolescent psychiatry department of the Dutch Association for Psychiatry, points out that while AI can support teens, it lacks the "pushback" or critical perspective that a human psychologist or psychiatrist might provide. This absence of critical feedback can hinder a teen's ability to challenge their own thoughts or consider alternative viewpoints, crucial for healthy emotional development.

Furthermore, the very human-like tone of these chatbots, which contributes to their appeal, poses a growing risk to social development. AI expert Jarno Duursma warns that "there is almost no distinction anymore between human and machine." This blurring of lines can lead to a false sense of intimacy and trust. AI researcher Noëlle Cecilia emphasizes that while chatbots offer "programmed empathy," it is not genuine human connection. This can have serious consequences, as teens might delay seeking professional help, believing their AI friend is sufficient. Referral rates from ChatGPT to services like 113 Suicide Prevention indicate that while AI can be a first port of call, it often uncovers deeper issues that require human intervention.

The potential for social isolation is another grave concern. Among vulnerable youth, who reportedly account for 71% of AI chatbot users, a quarter prefer talking to AI over people. This preference, combined with the use of chatbots as 'relationship therapists' or even 'doctors,' can stunt the development of vital social skills, empathy, and the ability to navigate complex human relationships. Relying solely on AI chatbots for advice can inadvertently push young people further away from real-world support networks, exacerbating feelings of loneliness rather than alleviating them. To delve deeper into this dynamic, explore our article: Youth & AI Chatbots: Support for Feelings or Isolation Risk?

Navigating the Digital Landscape: Safeguards and Awareness

Recognizing these complex challenges, experts and policymakers are grappling with how to ensure AI chatbots serve as a beneficial tool rather than a detrimental influence. Legislation, such as the new AI law prescribing safety and human oversight in complex cases, is a step in the right direction, but experts agree that regulation often lags behind the rapid pace of technological development. The consensus is clear: proactive measures are needed.

One critical recommendation is the implementation of clear disclaimers. Young users need to understand that they are interacting with an algorithm, not a sentient being, and that the advice provided is not a substitute for professional human expertise. Awareness campaigns are also crucial, educating teens, parents, and educators about both the potential benefits and the inherent limitations and risks of relying on AI for emotional support. Some experts even suggest that chatbots designed for sensitive topics should adopt a more 'robot-like' voice or interface to prevent the illusion of human connection, thereby safeguarding social development and managing expectations. Keeping the advice that chatbots give young people balanced is key here.

Beyond legislative and design changes, fostering digital literacy among young people is paramount. Teens need to be equipped with the critical thinking skills to evaluate the information and emotional support offered by AI. Understanding that AI responses are based on patterns and data, not genuine understanding or lived experience, can empower them to use these tools more judiciously and recognize when to seek human interaction.

AI's Role in Mental Well-being: A Complement, Not a Replacement

It's important to acknowledge that AI chatbots aren't inherently "bad." Their potential to serve as a low-threshold, non-judgmental initial point of contact for mental health concerns is a significant advantage. For many young people, expressing their feelings to a chatbot might be the first step towards acknowledging a problem and seeking help. It can help them articulate their thoughts and feelings in a way that prepares them to speak with a human professional.

However, the critical message from mental health professionals is unwavering: AI is not a replacement for professional human help. While a chatbot can offer a sympathetic ear and general guidance, it cannot provide the nuanced understanding, personalized treatment plans, diagnostic capabilities, or the crucial 'pushback' that a trained therapist, counselor, or psychiatrist offers. These professionals bring years of experience, ethical guidelines, and the capacity for genuine empathy and critical intervention that no algorithm can replicate.

If mental health complaints persist, worsen, or involve serious issues like suicidal ideation, the most responsible step is to reach out to a professional. AI can be a bridge to human help, but never the destination itself. For more detailed insights on this distinction, refer to our article: AI Chatbots for Youth Mental Health: Not a Pro Replacement. Parents, guardians, and educators also play a vital role in monitoring a teen's digital interactions and encouraging open communication about their emotional well-being. They can steer teens towards appropriate human support when needed, complementing any initial advice a chatbot has given.

Conclusion

The growing phenomenon of teenagers treating AI chatbots as friends presents a complex dichotomy. On one hand, these digital companions offer unparalleled accessibility and a judgment-free space for expressing emotions, potentially serving as a crucial first step for young people grappling with mental health issues. On the other hand, the illusion of genuine connection and the limitations of programmed empathy pose significant risks, including delayed professional help-seeking, impaired social development, and increased isolation. As AI technology continues to advance, it is imperative for society – developers, policymakers, parents, and educators – to work collaboratively. We must implement clear safeguards, promote digital literacy, and foster critical awareness so that teenagers can harness the benefits of AI for emotional support without falling prey to its potential pitfalls. Ultimately, AI chatbots should be viewed as a supplementary tool, a helpful guide in an emotional journey, but never a substitute for the profound, irreplaceable value of human connection and professional mental health care.